The Rocky Road to Python’s JIT Future

I’ve been following Python’s JIT development since the initial announcements, and honestly? It’s been a rollercoaster. The project hit serious roadblocks earlier this year when key contributors disagreed on implementation approaches. What looked like Python’s breakthrough moment suddenly felt like another false start.

Here’s the thing that makes this different from previous attempts: the core team isn’t trying to bolt on JIT as an afterthought. They’re rebuilding fundamental pieces of the interpreter to make JIT compilation a first-class citizen. That’s ambitious, but it’s also why progress has been so stop-and-start.

What’s interesting here is how the community rallied around the project when it looked like it might stall indefinitely. Major corporate Python users (think Instagram, Dropbox, YouTube) started throwing serious engineering resources at the problem. When your biggest users are paying engineers to make your language faster, you know you’re onto something important.

Performance Gains That Actually Matter

Let’s talk numbers. Early benchmarks from the development branch show 2-5x performance improvements on compute-heavy workloads. That’s not as dramatic as what V8 did for JavaScript, but it’s substantial enough to change Python’s position in the performance conversation.

I think the real win isn’t raw speed—it’s consistency. Python’s performance has always been unpredictable. Some operations are blazing fast thanks to C extensions, others crawl because they hit pure Python bottlenecks. JIT compilation smooths out those rough edges by optimizing hot code paths automatically.
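To make that unevenness concrete, here’s a small sketch of my own (not taken from the JIT branch). It adds up the same list twice: once with the built-in `sum`, whose loop runs in C, and once with a hypothetical `python_sum` helper, whose loop runs in the bytecode interpreter. The exact timings depend on your machine and Python version, so treat the printed numbers as illustrative only.

```python
import timeit


def python_sum(values):
    """Sum integers with an explicit loop that runs in the bytecode interpreter."""
    total = 0
    for v in values:
        total += v
    return total


data = list(range(100_000))

# Both approaches compute the same result; only where the loop runs differs.
assert python_sum(data) == sum(data)

builtin_time = timeit.timeit(lambda: sum(data), number=20)
loop_time = timeit.timeit(lambda: python_sum(data), number=20)

print(f"built-in sum (loop in C):       {builtin_time:.4f}s")
print(f"explicit loop (pure Python):    {loop_time:.4f}s")
```

The explicit loop is the kind of hot path a JIT is meant to target: same semantics, but executed one bytecode at a time today.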

The machine learning community is particularly excited, and I get why. PyTorch and TensorFlow already use their own JIT compilers for model execution, but having native JIT in the Python interpreter means everything else gets faster too. Data preprocessing, visualization, even just loading and manipulating large datasets—all of that benefits from interpreter-level optimization.

What surprises me is how much this could impact web development. Django and Flask applications turn out to be CPU-bound rather than I/O-bound in many real-world scenarios. For those workloads, faster Python means higher request throughput without architectural changes. That’s huge for teams that can’t easily migrate to Go or Rust.
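How much a web app gains depends on what share of each request is spent running Python code. Here’s a rough back-of-the-envelope sketch using Amdahl’s law. The `overall_speedup` helper and the request profiles are my own assumptions for illustration, not measurements from any real Django or Flask app.

```python
def overall_speedup(cpu_fraction, jit_speedup):
    """Amdahl's-law estimate of end-to-end speedup for one request.

    cpu_fraction: share of request time spent running Python code (0..1).
    jit_speedup:  how much faster that share gets (e.g. 2.0 for 2x).
    """
    if not 0.0 <= cpu_fraction <= 1.0:
        raise ValueError("cpu_fraction must be between 0 and 1")
    if jit_speedup <= 0:
        raise ValueError("jit_speedup must be positive")
    return 1.0 / ((1.0 - cpu_fraction) + cpu_fraction / jit_speedup)


# Hypothetical request profiles; the fractions are made up for illustration.
profiles = [
    ("mostly waiting on the database", 0.2),
    ("half CPU, half I/O", 0.5),
    ("template-heavy, mostly CPU", 0.8),
]

for label, cpu_fraction in profiles:
    estimate = overall_speedup(cpu_fraction, 2.0)
    print(f"{label}: {estimate:.2f}x overall at a 2x JIT speedup")
```

The takeaway: a 2x faster interpreter only approaches 2x more throughput when the request is dominated by Python execution. The part spent waiting on I/O doesn’t shrink.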

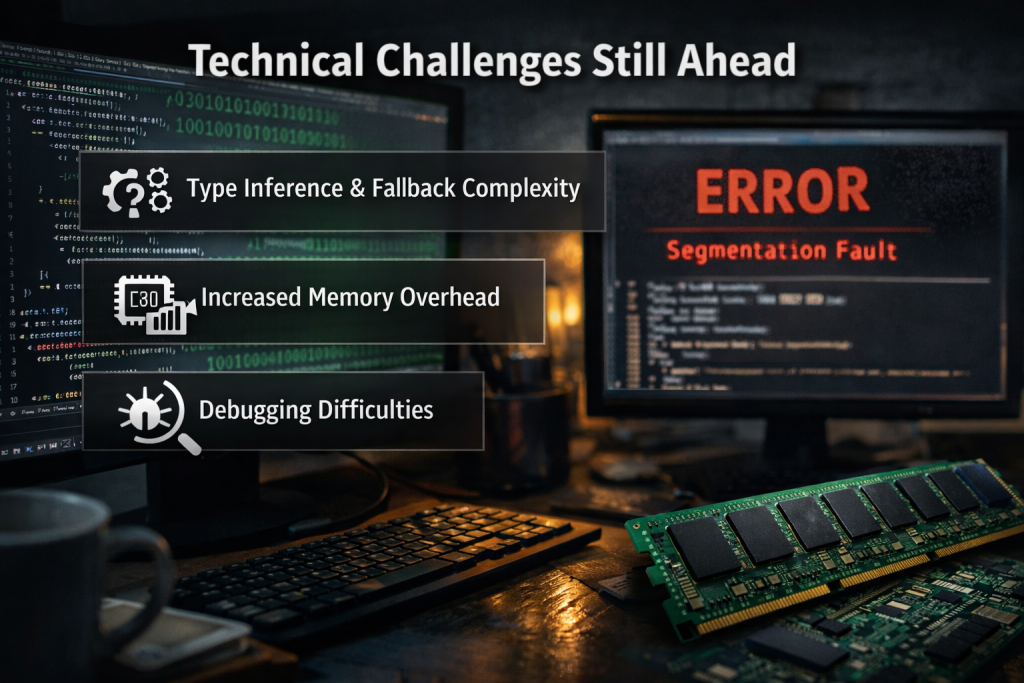

Technical Challenges Still Ahead

Don’t get me wrong—this isn’t a done deal yet. JIT compilation for dynamic languages is genuinely hard, and Python’s flexibility makes it even harder. The interpreter needs to make optimization decisions without complete type information, then gracefully fall back when those assumptions prove wrong.
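The "assume, then fall back" idea is easier to see in code. This is a toy model in plain Python, not how CPython’s JIT is actually implemented: a hypothetical `SpeculativeAdd` class checks a type assumption (the guard), takes a specialized path when it holds, and drops back to the generic operation when it doesn’t.

```python
class SpeculativeAdd:
    """Toy model of a type-specialized operation with a guard and a fallback."""

    def __init__(self):
        self.fast_hits = 0   # calls where the type assumption held
        self.fallbacks = 0   # calls where the guard failed

    def __call__(self, a, b):
        # Guard: the fast path assumes both operands are exactly int.
        if type(a) is int and type(b) is int:
            self.fast_hits += 1
            return int.__add__(a, b)  # stands in for specialized machine code
        # The assumption was wrong: fall back to the fully generic operation.
        self.fallbacks += 1
        return a + b


add = SpeculativeAdd()
results = [add(1, 2), add(10, 20), add("py", "thon"), add(1.5, 2)]
print(results, add.fast_hits, add.fallbacks)
# prints: [3, 30, 'python', 3.5] 2 2
```

A real JIT has to do this for every speculated operation, keep the guards cheap, and make sure the fallback produces exactly what the interpreter would have produced. That last part is where the difficulty lives.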

Memory usage is another concern that’s not getting enough attention. JIT compilers generate optimized machine code, but that code has to live somewhere. Early reports suggest memory overhead of 15-30% for applications that benefit most from JIT optimization. That’s manageable for most use cases, but it matters for memory-constrained environments.
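To see why that range matters in tight environments, here’s a quick arithmetic sketch. The 512 MiB baseline and 640 MiB container limit are hypothetical numbers I picked for illustration; only the 15-30% range comes from the reports above.

```python
def projected_footprint_mib(baseline_mib, overhead_percent):
    """Baseline memory plus a percentage overhead, in MiB."""
    return baseline_mib * (100 + overhead_percent) / 100


# Hypothetical service: 512 MiB today, running under a 640 MiB container limit.
baseline_mib = 512
limit_mib = 640

for overhead_percent in (15, 30):
    projected = projected_footprint_mib(baseline_mib, overhead_percent)
    verdict = "fits" if projected <= limit_mib else "exceeds the limit"
    print(f"+{overhead_percent}%: {projected:.1f} MiB, {verdict}")
# prints:
# +15%: 588.8 MiB, fits
# +30%: 665.6 MiB, exceeds the limit
```

Same service, same limit, and the difference between the low and high end of the range is the difference between running fine and getting killed by the container runtime.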

The debugging story also needs work. When your Python code gets compiled to optimized machine code, traditional debugging tools start to break down. Stack traces become less reliable, profiling gets more complex, and stepping through code in a debugger doesn’t always work as expected.

Here’s what I’m watching closely: backward compatibility. Python’s strength has always been its stability and predictability. If JIT compilation introduces subtle behavioral changes or breaks existing code, the community won’t adopt it regardless of performance gains. The core team knows this, but execution is everything.

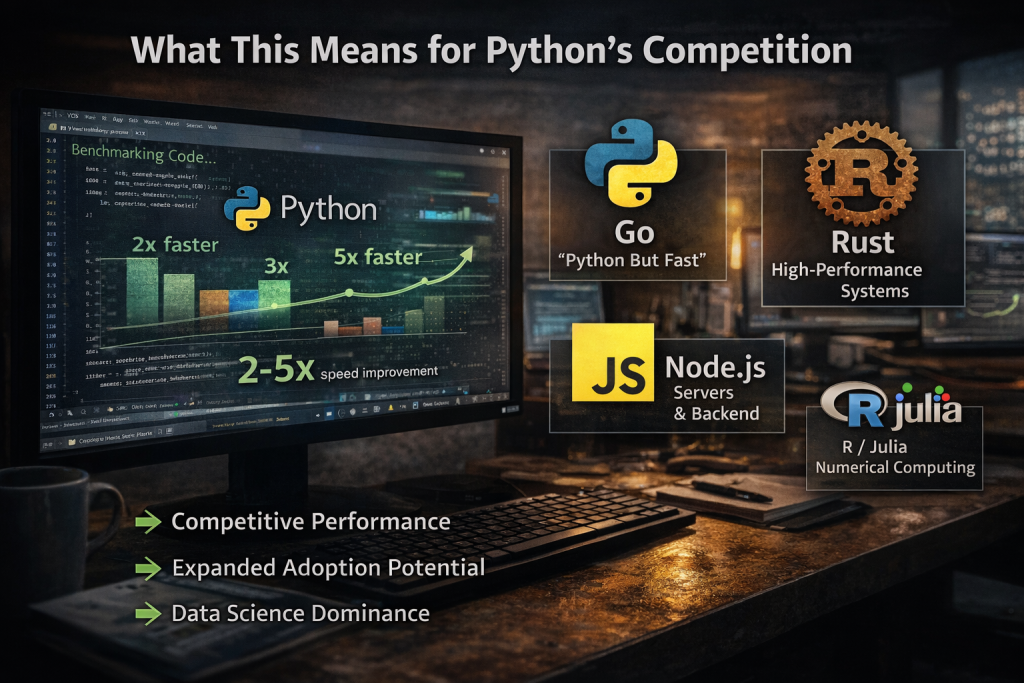

What This Means for Python’s Competition

Python’s performance problems have been a gift to competing languages for years. Go often gets pitched as “Python but fast.” Rust advocates point to benchmarks showing 100x speed differences. JavaScript developers love to mention that Node.js often outperforms Python web servers. A successful JIT implementation changes that narrative completely.

I think the biggest impact will be on which language teams pick for new projects, not on migrating existing codebases. Teams choosing between Python and alternatives won’t automatically rule out Python for performance reasons. That’s a huge shift in the enterprise software landscape.

The data science ecosystem is where Python really strengthens its position. R and Julia have made inroads by offering better performance for numerical computing, but Python’s ecosystem and tooling advantages are massive. Faster Python execution makes it even harder to justify switching to more specialized languages.

What’s really interesting is how this affects JavaScript’s server-side story. Node.js succeeded partly because JavaScript’s V8 engine is incredibly fast. If Python gets comparable JIT performance while maintaining its superior standard library and cleaner syntax, that’s a compelling alternative for backend development.

Python 3.15’s JIT development getting back on track isn’t just good news for Python developers—it’s potentially transformative for the entire programming language ecosystem. If the core team can deliver on the performance promises without sacrificing Python’s legendary ease of use, we’re looking at a language that finally closes the gap between developer productivity and runtime performance. That’s been the holy grail of programming language design for decades, and Python might just be the first mainstream language to nail it.